PRD Generator Prompts: 8 AI Prompt Templates for Product Managers

Create better PRDs faster with AI. Explore 8 proven AI PRD prompt templates product managers actually use—plus how to automate PRD generation with Kuse.

Product managers don’t struggle because they lack ideas. They struggle because translating messy inputs—research notes, stakeholder feedback, strategy decks—into clear, execution-ready documents is slow, fragmented, and mentally expensive.

That’s why AI PRD prompts are becoming a core part of modern product workflows.

Used well, AI doesn’t replace product thinking. It accelerates the structuring of that thinking—turning scattered context into drafts, outlines, and decision-ready artifacts. The difference between weak and powerful results often comes down to one thing: the quality of the prompt.

This guide explains what a PRD is, what makes an effective AI PRD prompt, and provides 8 reusable AI prompt templates covering the most common product management outputs—from PRDs and competitive analysis to launch planning and iteration. You’ll also see how teams automate these workflows inside Kuse for continuity across the product lifecycle.

What Is a PRD?

A Product Requirements Document (PRD) defines what a product should do, for whom, and why. It aligns product, design, engineering, and stakeholders around a shared understanding of scope, constraints, and success.

A strong PRD typically includes:

- Problem definition and context

- Target users and use cases

- Goals and success metrics

- Functional and non-functional requirements

- Assumptions, constraints, and dependencies

- Open questions and risks

In modern product teams, PRDs are rarely static. They evolve continuously as new insights, tradeoffs, and feedback emerge—which makes AI particularly valuable when used as a structuring assistant, not a replacement author.

What Makes a Successful AI PRD Prompt?

Most failed AI-generated PRDs don’t fail because the model is weak—they fail because the prompt does not encode product thinking.

A successful AI PRD prompt works because it translates how product managers think into instructions the model can follow. In practice, strong prompts share several critical elements that go far beyond “write a PRD.”

1. Clear Product Context (Not Just a Topic)

AI performs poorly when it lacks situational grounding. Simply stating “write a PRD for a task management app” produces generic output because the model has no sense of why this product exists or what problem it serves.

Effective prompts provide context such as:

- Product stage (early discovery, iteration, scaling)

- Target users and environment

- Market or organizational constraints

- Strategic intent behind the document

This context helps AI distinguish between exploration and execution documents and prevents overly confident but misaligned requirements.

2. Explicit Decision Purpose

PRDs serve different purposes at different moments:

- Alignment across teams

- Validation of scope

- Execution guidance

- Stakeholder sign-off

Strong prompts explicitly state what the PRD is for. This shapes tone, depth, and structure. A PRD meant for early alignment should emphasize assumptions and open questions, while one meant for execution should prioritize clarity and edge cases.

Without this signal, AI tends to default to a one-size-fits-all spec.

3. Constraints That Shape Tradeoffs

Real product work is defined by constraints—technical limits, timelines, regulatory requirements, dependencies, and organizational realities.

Including constraints in a prompt does two things:

- It prevents AI from proposing unrealistic or over-scoped solutions

- It forces the output to reflect tradeoffs, not idealized designs

Well-crafted prompts treat constraints as first-class inputs, not afterthoughts.

4. Structured Output Expectations

AI is far more effective when it knows how to organize information.

Prompts that specify section structure (e.g. Overview → Users → Requirements → Risks) tend to produce more usable drafts than free-form prompts, because the model fills a known outline instead of inventing one. This mirrors how PMs think: structure first, detail second.

Importantly, structure also makes outputs easier to review, edit, and reuse across teams.
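A specified structure also makes a draft checkable. The sketch below is one possible way to confirm that a generated draft contains every section the prompt asked for. The names `EXPECTED_SECTIONS` and `missing_sections` are illustrative, not part of any tool, and the check assumes each section title starts its own line (optionally behind a markdown heading mark or a number).

```python
import re

# Outline taken from the example above; adjust to whatever the prompt requested.
EXPECTED_SECTIONS = ["Overview", "Users", "Requirements", "Risks"]


def missing_sections(draft: str, expected: list[str]) -> list[str]:
    """Return the expected section titles that never start a line in the draft."""
    missing = []
    for title in expected:
        # Allow optional markdown heading marks or numbering before the title.
        pattern = rf"^\s*(?:#+\s*|\d+[.)]\s*)?{re.escape(title)}\b"
        if not re.search(pattern, draft, flags=re.IGNORECASE | re.MULTILINE):
            missing.append(title)
    return missing


draft = """# Overview
A short summary.

## Users
Team leads.

## Requirements
Must sync offline.
"""

print(missing_sections(draft, EXPECTED_SECTIONS))  # prints ['Risks']
```

A missing section is a cue to re-prompt or to fill the gap by hand. It says nothing about whether the sections that are present are any good.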

5. Role Awareness

Strong prompts make the audience clear, whether stated outright or implied by context: product, engineering, design, leadership, or cross-functional stakeholders.

When a prompt encodes role expectations, AI adjusts language, depth, and emphasis—reducing the gap between “AI draft” and “usable internal document.”
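The five elements above can be treated as explicit fields rather than something to remember each time. The following is a minimal sketch under that assumption. `PromptSpec` and its field names are hypothetical, and the example values are invented for illustration around the task management app mentioned earlier.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """The five prompt elements described above, as explicit fields."""

    context: str   # product stage, users, market
    purpose: str   # what the PRD is for
    audience: str  # who will read it
    constraints: list[str] = field(default_factory=list)
    sections: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble the fields into one prompt string."""
        parts = [
            f"Product context: {self.context}",
            f"Purpose of this document: {self.purpose}",
            f"Audience: {self.audience}",
        ]
        if self.constraints:
            parts.append("Constraints: " + "; ".join(self.constraints))
        if self.sections:
            parts.append("Structure the output as: " + ", ".join(self.sections))
        return "\n".join(parts)


# Illustrative values only.
spec = PromptSpec(
    context="Task management app in early discovery, aimed at small agencies",
    purpose="Early alignment: emphasize assumptions and open questions",
    audience="Engineering and design leads",
    constraints=["Must ship within one quarter", "No new backend services"],
    sections=["Overview", "Users", "Requirements", "Risks"],
)
prompt = spec.render()
print(prompt)
```

Making purpose and audience required fields means a prompt cannot be built without them, which is the point of the checklist above.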

8 Product Management Functions AI Can Support (With Prompt Templates)

1. PRD First Draft

Typical PM scenario

Discovery is done and the inputs exist (research notes, stakeholder feedback, strategy decks), but nobody has turned them into a structured first draft yet.

Prompt template:

“Using the following context, generate a structured Product Requirements Document. Product background: [describe product, users, and market] Problem statement: [key problem] Goals: [business + user goals] Constraints: [technical, timeline, regulatory] Please structure the PRD with: Overview, User Personas, Problem Definition, Goals & Metrics, Functional Requirements, Non-Functional Requirements, Assumptions, Risks, and Open Questions.”

Why this prompt works

The prompt anchors AI in:

- Real product context

- Explicit goals and constraints

- A clear PRD structure

This prevents generic output and turns AI into a drafting accelerator, not a decision-maker.
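Templates like this one are only as good as the values pasted into the bracketed slots, and a forgotten slot is easy to miss. The sketch below shows one way to fill the `[placeholder]` slots and fail loudly when a value is missing. The helper `fill_template` is hypothetical, and the template is shortened here to two slots for readability.

```python
import re

# Matches a bracketed slot such as [key problem]; nested brackets are not supported.
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace each [placeholder] with its value; raise if any slot has no value."""
    missing = [name for name in PLACEHOLDER.findall(template) if name not in values]
    if missing:
        raise KeyError(f"No value supplied for: {missing}")
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


# Shortened version of the template above; values are invented for illustration.
template = "Problem statement: [key problem] Constraints: [technical, timeline, regulatory]"
values = {
    "key problem": "Teams lose track of handoffs",
    "technical, timeline, regulatory": "Ship within one quarter",
}

filled = fill_template(template, values)
print(filled)
# prints: Problem statement: Teams lose track of handoffs Constraints: Ship within one quarter
```

The same helper works for any of the bracketed templates in this guide, since it keys on the slot text itself.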

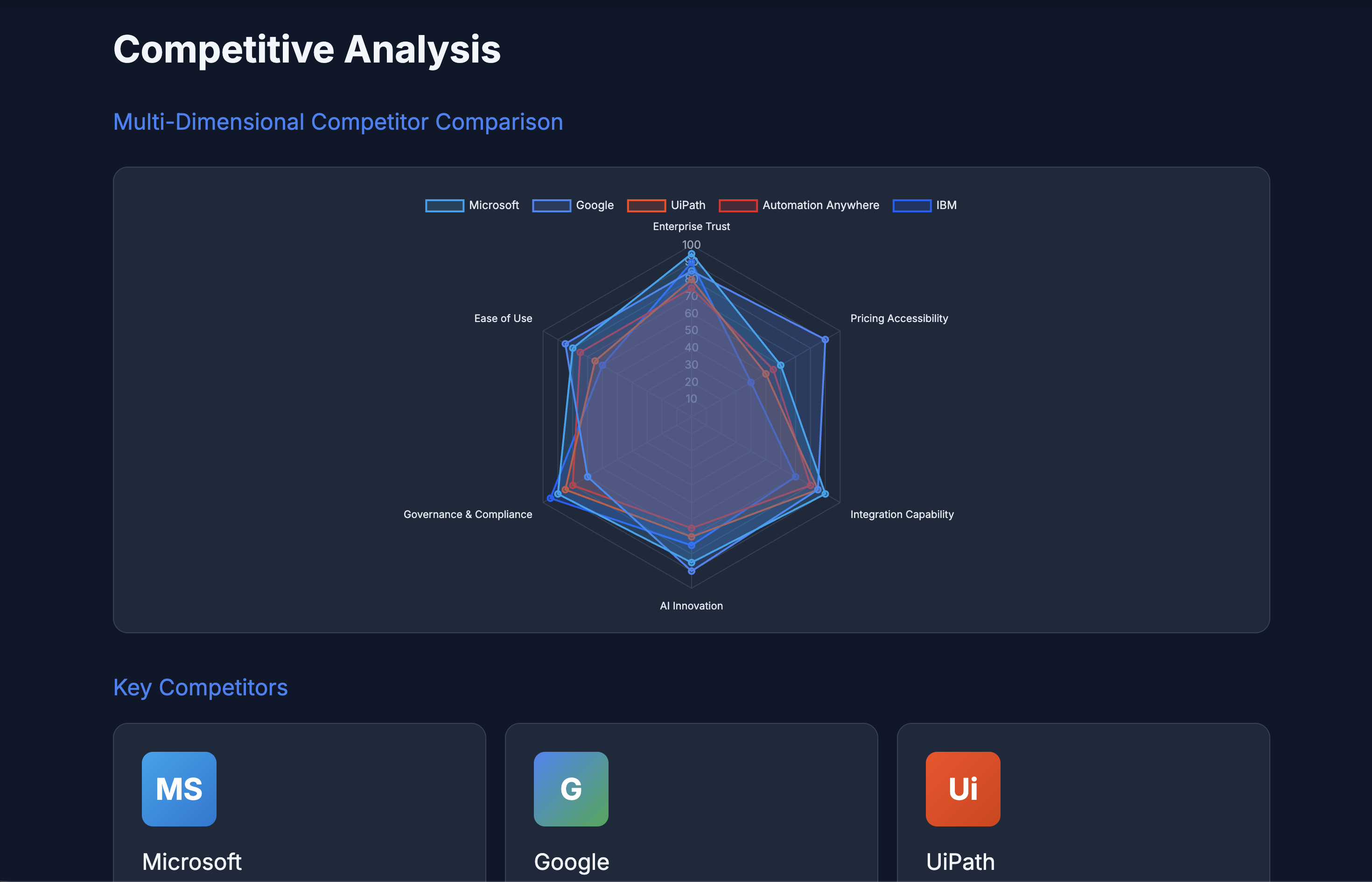

2. Competitive Analysis Draft

Typical PM scenario

Before roadmap prioritization, stakeholders ask: “How are competitors solving this?” You have scattered notes, links, and opinions—but no clean synthesis.

Prompt template:

“Analyze the competitive landscape for [product/category]. Compare at least 3 competitors across positioning, core features, pricing model, strengths, weaknesses, and differentiation opportunities. Summarize implications for product strategy and unmet opportunities.”

Why this prompt works

It directs AI to:

- Compare across consistent dimensions

- Move beyond feature lists into strategic implications

- Frame outputs for decision-making, not reporting

The result is insight-oriented analysis, not a data dump.

3. User Problem & Opportunity Framing

Typical PM scenario

You’ve collected dozens of user quotes and tickets. Patterns are emerging—but stakeholders disagree on which problems actually matter.

Prompt template:

“Based on these user insights [paste notes], synthesize the core user problems. Group them by severity, frequency, and strategic importance. Identify which problems represent near-term vs long-term product opportunities.”

Why this prompt works

It forces AI to:

- Group problems meaningfully

- Rank by impact and frequency

- Distinguish tactical issues from strategic opportunities

This mirrors how experienced PMs frame problem spaces.

4. Feature Scope Definition

Typical PM scenario

A feature idea has momentum, but scope creep is already happening. Engineering asks for clarity; stakeholders keep adding “just one more thing.”

Prompt template:

“Define the feature scope for [feature name]. Include: user story, functional requirements, edge cases, non-goals, and success criteria. Assume this feature must ship within [timeframe] and integrate with [systems].”

Why this prompt works

By explicitly requesting non-goals and edge cases, the prompt:

- Prevents silent assumptions

- Makes tradeoffs visible

- Produces a scope artifact teams can align around

This reduces downstream friction.

5. Metrics & Success Criteria Definition

Typical PM scenario

A feature ships, but weeks later the team debates whether it was “successful.”

Prompt template:

“Define success metrics for [feature name]. Distinguish between output metrics (what the team does), user experience metrics (what users experience), and outcome metrics (the results that actually matter). For each metric, state how it will be measured and what result would count as success.”

Why this prompt works

It forces differentiation between:

- What teams do

- What users experience

- What outcomes actually matter

This aligns measurement with product intent.

6. Launch Readiness & GTM Alignment

Typical PM scenario

Product, marketing, sales, and support are preparing for launch—but everyone has a slightly different understanding of what’s shipping.

Prompt template:

“Create a launch readiness checklist for [product/feature]. Include product scope validation, messaging alignment, sales enablement needs, support readiness, and known risks. Highlight any dependencies or unresolved assumptions.”

Why this prompt works

It frames launch readiness as a cross-functional system rather than a list of boxes to tick, surfacing gaps between promise and reality before customers find them.

7. Post-Launch Feedback Synthesis

Typical PM scenario

After launch, feedback floods in—but insights remain fragmented across tools and conversations.

Prompt template:

“Analyze the following post-launch feedback [paste data]. Identify recurring themes, root causes, and priority issues. Map each theme back to original assumptions or requirements.”

Why this prompt works

It explicitly links feedback to earlier assumptions and requirements, turning feedback into learning rather than noise.

8. PRD Iteration & Refinement

Typical PM scenario

A PRD exists, but everyone senses it’s “not quite right”—unclear sections, missing assumptions, or hidden risks.

Prompt template:

“Review this PRD and suggest improvements based on clarity, completeness, and risk. Identify missing assumptions, unclear requirements, and areas likely to cause implementation confusion.”

Why this prompt works

It asks AI to critique structure and logic, not rewrite content blindly—making it a second-pass thinking partner.

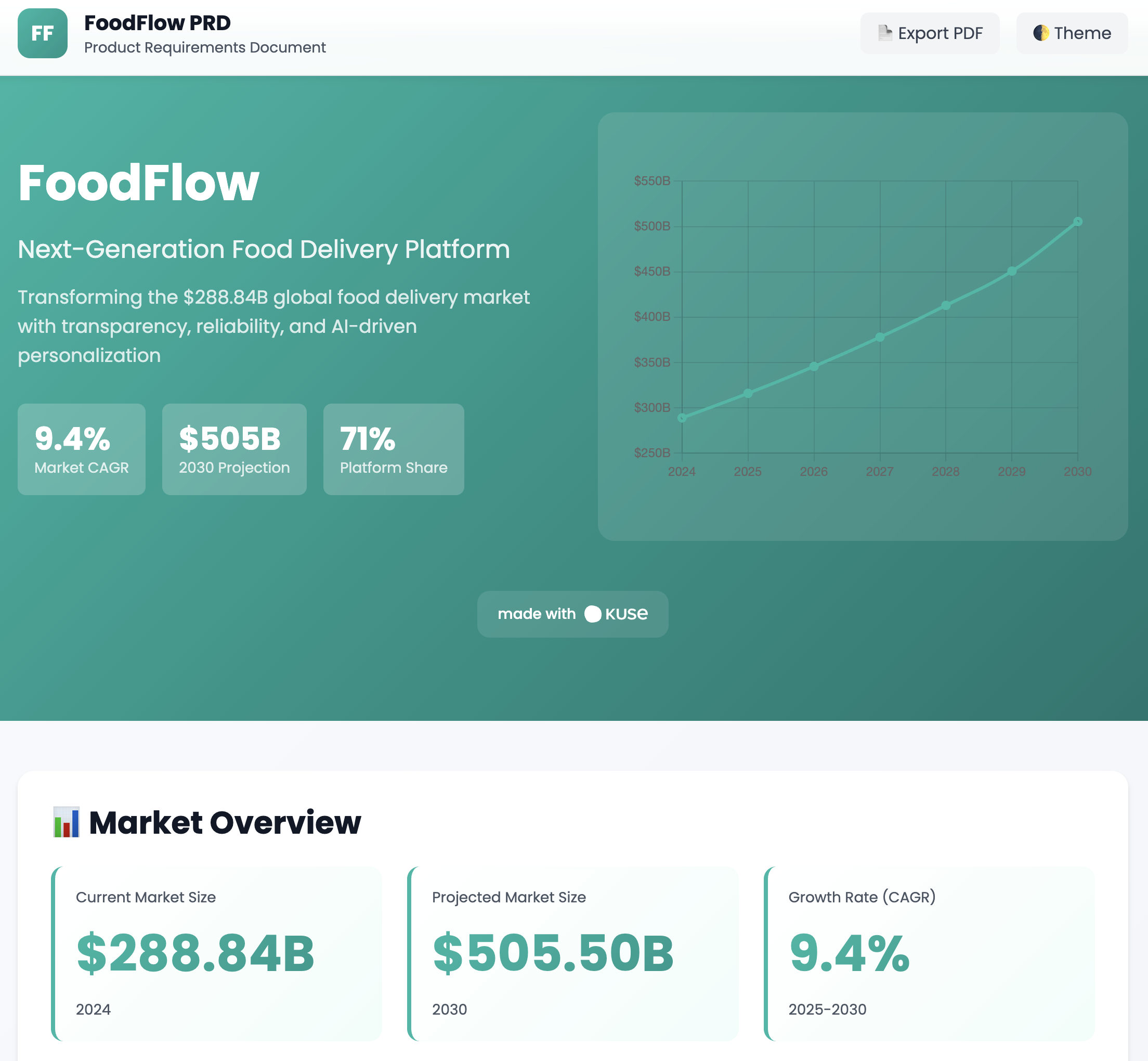

How to Automate AI PRD Prompts in Kuse

The real power of AI PRD prompts emerges when they’re embedded in a persistent product workspace, not used as one-off chat interactions.

In Kuse, teams typically follow this workflow:

Step 1: Centralize Context

Upload discovery notes, research docs, stakeholder feedback, prior PRDs, and roadmap materials into one project space.

Step 2: Apply Prompt Templates

Use the prompt templates above directly against all relevant context at once, instead of copying fragments into multiple tools.

Step 3: Generate Structured Outputs

Kuse produces PRDs, analyses, and summaries that stay connected to source materials—making assumptions traceable.

Step 4: Iterate Without Context Loss

As decisions change, regenerate or refine outputs without starting over. Every version builds on accumulated knowledge.

This turns AI prompts from shortcuts into lifecycle assets.
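The four steps can be pictured in plain Python. This is a conceptual sketch only: `ProjectSpace` and its methods are hypothetical stand-ins and do not represent the Kuse product or any Kuse API, and the model call itself is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectSpace:
    """Hypothetical stand-in for a shared project workspace (not the Kuse API)."""

    documents: dict[str, str] = field(default_factory=dict)
    versions: list[str] = field(default_factory=list)

    def add_document(self, name: str, text: str) -> None:
        # Step 1: centralize context in one place.
        self.documents[name] = text

    def build_prompt(self, template: str) -> str:
        # Step 2: apply a template against all stored context at once.
        context = "\n\n".join(
            f"--- {name} ---\n{text}" for name, text in self.documents.items()
        )
        return f"{template}\n\nContext:\n{context}"

    def record_output(self, output: str) -> int:
        # Steps 3 and 4: keep every generated version so later runs
        # can build on earlier ones. Returns the new version number.
        self.versions.append(output)
        return len(self.versions)


space = ProjectSpace()
space.add_document("research-notes", "Users forget handoffs between teams.")
space.add_document("stakeholder-feedback", "Sales wants a shared status view.")

prompt = space.build_prompt("Review this PRD and suggest improvements.")
# The prompt would be sent to a model here; that call is out of scope for this sketch.
version = space.record_output("Draft v1 text")
print(version)  # prints 1
```

The design point is that context lives with the project, not in the chat window, so every template draws on the same accumulated material.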

Conclusion

AI PRD prompts are not about writing faster—they’re about thinking more clearly under complexity.

When product managers encode their reasoning into structured prompts, AI becomes a multiplier: accelerating alignment, reducing cognitive overhead, and preserving context across the product lifecycle.

The teams that win with AI won’t be those who generate the most documents—but those who build repeatable, prompt-driven workflows that evolve alongside their products.